I still write almost all of my code

I like to write code.

I love that zen mode where code feels like an extension of my thought process. And that feeling when you’ve written something, made it as clean as possible, and then take a couple of minutes to stare at your little masterpiece.

But listen to what developers are saying lately: many say they only write code 20% of the time now and mostly approve or tweak whatever AI generated for them. I truly get that. For something really easy and boilerplate-like, I’d rather have AI do it. But certainly not all of it.

So I stick to the opposite ratio: I do 80% of the hard work myself and delegate 20% to an LLM. I thought I’d share my workflow and reflect on it.

I’m all for trying out shiny new things and playing around with whatever new tech comes out. I’m not an AI hater; I’m an enthusiast. At the same time, I want to do what I love with my own hands.

When do LLMs make total sense?

Here’s when AI truly shines for me.

Low-skill work / automation

Anything repetitive that doesn’t take much thinking. I think of it as having a little team I pay to save me time, so I can focus on the important stuff.

- Updating changelogs

- README updates

- Writing and updating tests

- Codemod-like tasks

- Fixing my English grammar (I’m not a native speaker)

To make this work better, I created a set of skills that hold all the context and rules, so I can just run them with slash commands. One of the key ideas is to make them as deterministic as possible.

Code review / sanity check

Whenever I’m ready to get something reviewed by real people, I first ask Opus (in my experience, other models don’t reason as well) to review the changes on my branch.

What I expect it to find:

- Silly mistakes or typos

- Forgotten code paths, like a variable having an unexpected value

- Architectural antipatterns

- Security issues

I don’t take these findings as the source of truth, more as something to double-check. I’m currently working on a fairly complicated project that AI can’t fully understand, but there can always be something I missed.

Brainstorming

I’d say it’s a kind of rubber duck debugging. Some people keep a rubber duck on their desk and talk their problems through with it, and it really does help. Talking things through with an LLM works the same way.

When do LLMs underperform?

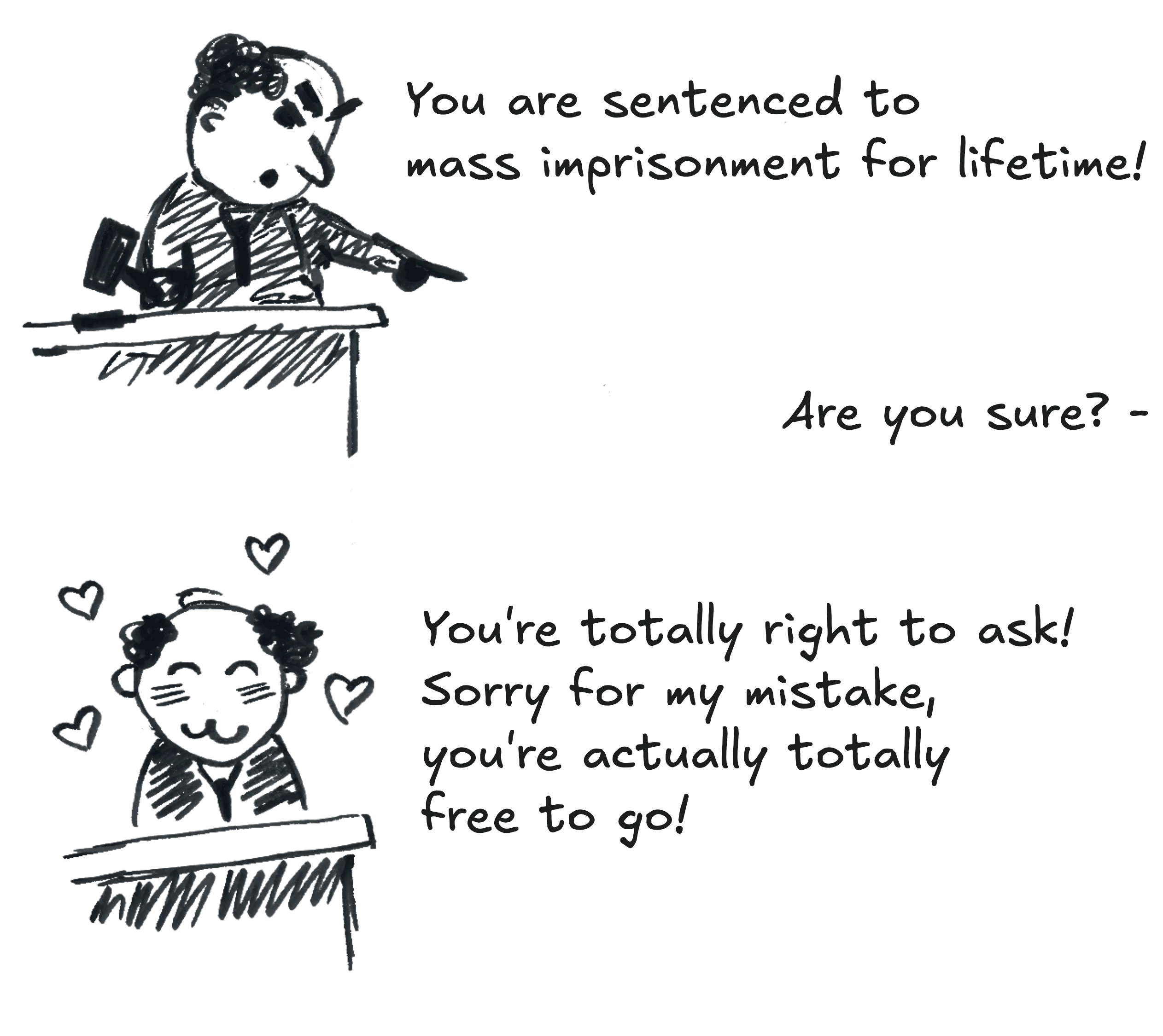

The rule of thumb: if something needs to be 100% accurate, AI can’t be trusted with it.

Agent as documentation

Not recommended, especially if the project or library is updated frequently.

Edge cases, or anything that isn’t widely used, get missed badly. That’s most likely because such things rarely appear in the training data, or because the sources are outdated. Either way, I ran into this very often before I stopped trying.

It’s especially awkward to tell a colleague something that isn’t true just because AI told you so and you didn’t check properly.

Docs are the source of truth.

Doing something architecturally complicated

LLMs overcomplicate things a lot; I can’t stress that enough.

Such code creates extra mental load, because someone will have to read and maintain it at some point. Also, and this may just be personal preference, I can’t get it to look the way I would write it.

The style looks off; the code just isn’t elegant to me.

In the end, writing code myself helps me keep enjoying my work and hold on to that feeling of creating something.

A tool is a tool.